Batch calculations of P/L and risk metrics cost tens of millions a year. These grid-computing costs are driven by the combination of complex valuation models and large portfolios of structured products. Despite this large investment in computing resources, the results are barely acceptable. The risk sensitivities reflect conditions at the close of business and quickly become stale in volatile markets. Trading performance is not visible in real time while the market is open. And with increasingly stringent regulation, the cost of calculations is only going to increase.

But it doesn’t have to be this way.

Riskfuel can dramatically accelerate these calculations. We use machine learning to make a fast copy of the in-house model. From the outside, the Riskfuel model looks like the in-house model: it takes in the same input parameters and produces the same results. Internally, however, the complex simulation has been replaced by very fast deep neural network (DNN) inference. The calculations are performed in a fraction of the time at less than 1% of the compute cost of the in-house version. What once took a data center full of servers all night to calculate can now be done with a handful of servers in minutes. Turbocharge your risk management AND reduce your computing costs at the same time with Riskfuel!

Riskfuel AI Models Match Your Existing APIs

(No proprietary systems, expensive consultants, or extensive infrastructure plumbing required)

Riskfuel models match the API of your existing models: they take the same inputs, return the same outputs, and are completely interchangeable with them, so you can easily switch between the old model and the new one. There are no proprietary systems, no new tools to learn, and no consultants to hire. We’ll give you the Riskfuel model in the same format as the existing in-house model, ready for you to deploy the same way you deploy any other model.

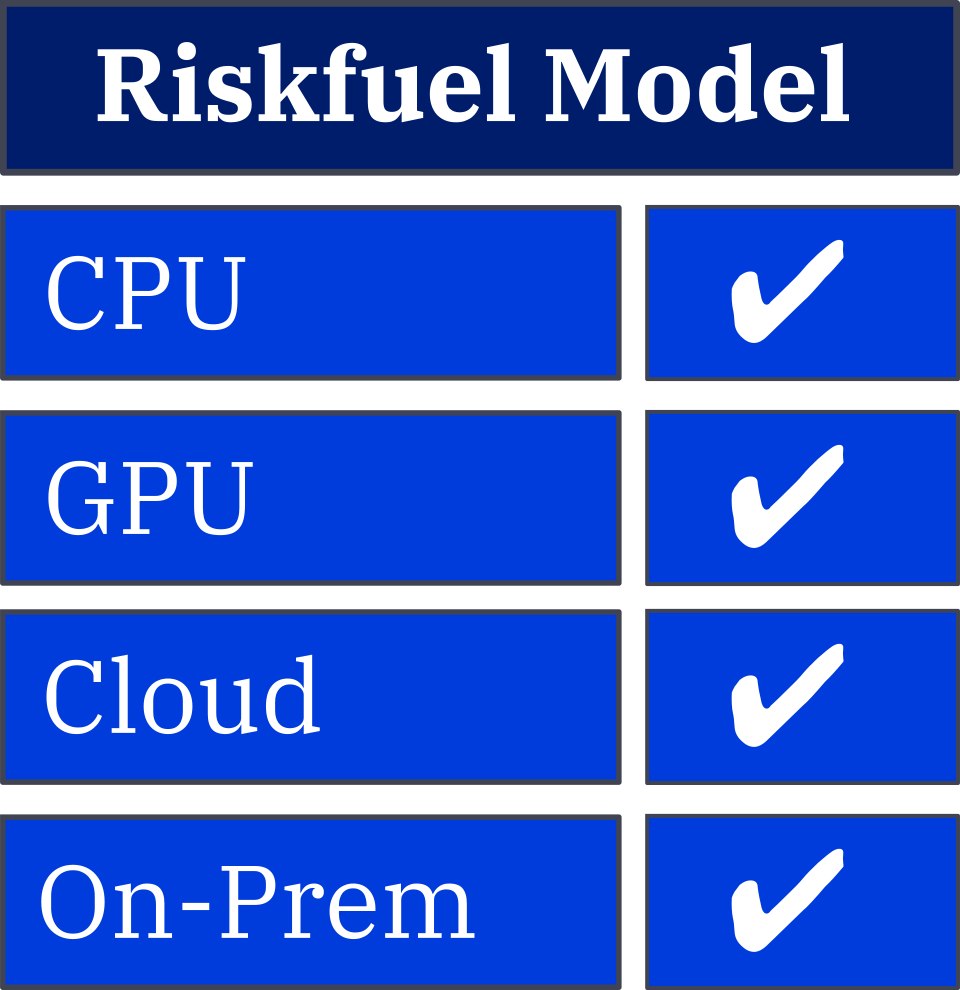
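As a rough illustration of what "same inputs, same outputs" can look like in code, the sketch below defines a shared pricing interface and two interchangeable implementations. All names here (`Pricer`, `InHousePricer`, `FastPricer`, `portfolio_value`) are hypothetical and chosen for this example; they are not part of any Riskfuel product. The "fast" model is a simple analytic approximation standing in for a trained network.

```python
import math
from typing import Protocol, Sequence, Tuple


class Pricer(Protocol):
    """Hypothetical interface that both models expose."""

    def price(self, spot: float, vol: float) -> float:
        ...


class InHousePricer:
    """Stand-in for an existing model.

    Closed-form Black-Scholes price of a one-year, at-the-money call
    with zero interest rates: spot * erf(vol / (2 * sqrt(2))).
    """

    def price(self, spot: float, vol: float) -> float:
        return spot * math.erf(vol / (2.0 * math.sqrt(2.0)))


class FastPricer:
    """Stand-in for an accelerated model with the identical signature.

    Uses the first-order expansion erf(x) ~ 2x / sqrt(pi); a real
    surrogate would be a trained network, which is not shown here.
    """

    def price(self, spot: float, vol: float) -> float:
        return spot * vol / math.sqrt(2.0 * math.pi)


def portfolio_value(
    pricer: Pricer, positions: Sequence[Tuple[float, float, float]]
) -> float:
    """Sum quantity * price over (quantity, spot, vol) positions."""
    return sum(qty * pricer.price(spot, vol) for qty, spot, vol in positions)


# Assumed sample positions: (quantity, spot, volatility).
positions = [(10.0, 100.0, 0.2), (5.0, 50.0, 0.1)]
slow_total = portfolio_value(InHousePricer(), positions)
fast_total = portfolio_value(FastPricer(), positions)  # only the object changes
```

Because the calling code depends only on the shared signature, switching models means passing a different object; nothing downstream of `portfolio_value` has to change.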

Riskfuel Models are Flexible

CPU or GPU, On-premise or Cloud

Riskfuel models can be deployed on CPU or GPU, on Windows or Linux, and have been designed to work seamlessly in the Cloud. Riskfuel models can run anywhere on anything.

Models can be Deployed One-by-One

Start with a Risk-Free Pilot

You have hundreds of models running in your daily batch jobs, and upgrading them all at once would be a large, risky IT project. We recommend starting with a single model: accelerate it, validate it, and deploy it. We’ll develop an accelerated version of that model, demonstrating the extreme speed-ups that can be achieved with the Riskfuel approach. You will be able to see just how easy it is to deploy Riskfuel models in your own environments, and we can work together to smooth out any bumps in the road before applying the Riskfuel method to the rest of your batch-processed portfolio.
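The "validate it" step of a pilot could be sketched as a comparison of the accelerated model against the existing one over a set of test scenarios. This is a minimal sketch under stated assumptions: the function names, the scenario grid, and the 1% tolerance are all hypothetical, and both models are toy stand-ins (a closed-form price and its first-order approximation), not actual in-house or Riskfuel models.

```python
import math
from typing import Callable, Iterable, Tuple

Model = Callable[[float, float], float]  # (spot, vol) -> price


def reference_model(spot: float, vol: float) -> float:
    """Stand-in for the existing model (ATM call, zero rates, one year)."""
    return spot * math.erf(vol / (2.0 * math.sqrt(2.0)))


def candidate_model(spot: float, vol: float) -> float:
    """Stand-in for the accelerated model (first-order approximation)."""
    return spot * vol / math.sqrt(2.0 * math.pi)


def max_relative_error(
    reference: Model, candidate: Model, scenarios: Iterable[Tuple[float, float]]
) -> float:
    """Return the worst relative deviation of candidate from reference."""
    worst = 0.0
    for spot, vol in scenarios:
        ref = reference(spot, vol)
        err = abs(candidate(spot, vol) - ref) / max(abs(ref), 1e-12)
        worst = max(worst, err)
    return worst


def passes_validation(
    reference: Model,
    candidate: Model,
    scenarios: Iterable[Tuple[float, float]],
    tolerance: float,
) -> bool:
    """Accept the candidate only if every scenario is within tolerance."""
    return max_relative_error(reference, candidate, scenarios) <= tolerance


# Assumed test grid over spot and volatility.
scenarios = [(s, v) for s in (50.0, 100.0, 150.0) for v in (0.05, 0.1, 0.2, 0.4)]
worst = max_relative_error(reference_model, candidate_model, scenarios)
accepted = passes_validation(reference_model, candidate_model, scenarios, 0.01)
```

In practice the scenario set and acceptance threshold would come from your own model validation standards; the point of the sketch is only that a one-model pilot lets both be checked side by side before anything is switched over.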

Try Out Our Demo Pricer
